Interpretable Discovery in Large Image Data Sets

Kiri Wagstaff, Jet Propulsion Laboratory, California Institute of Technology

Jake Lee, Columbia University

Abstract

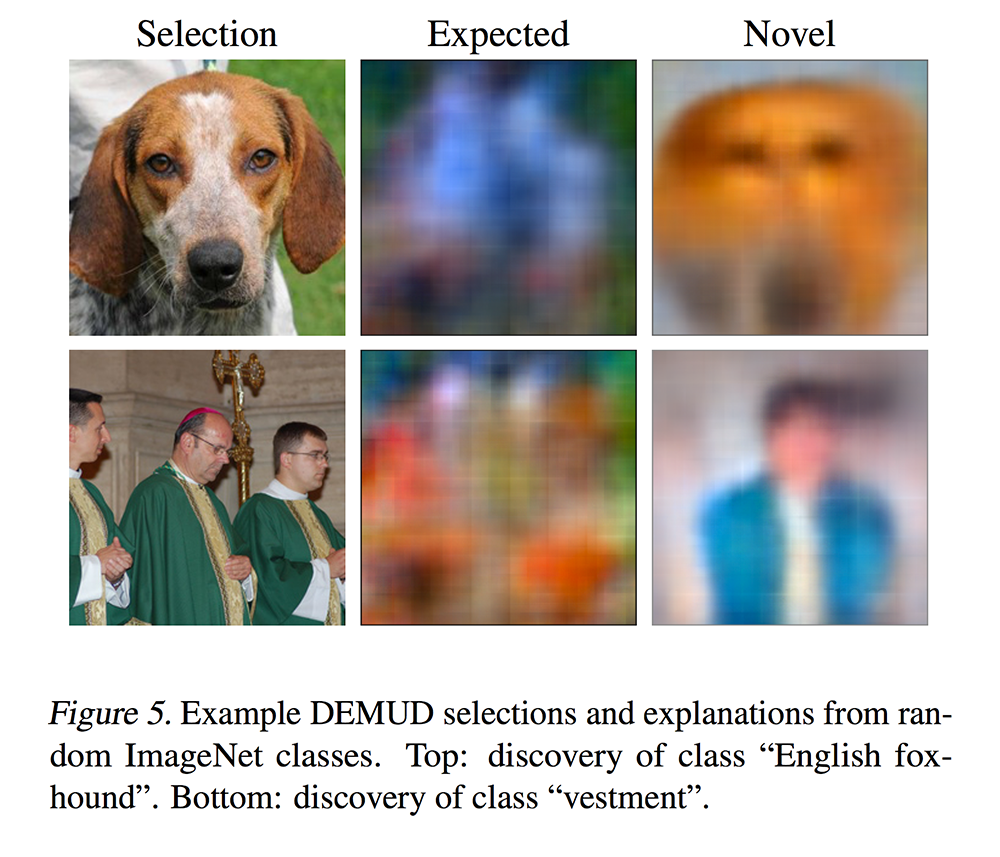

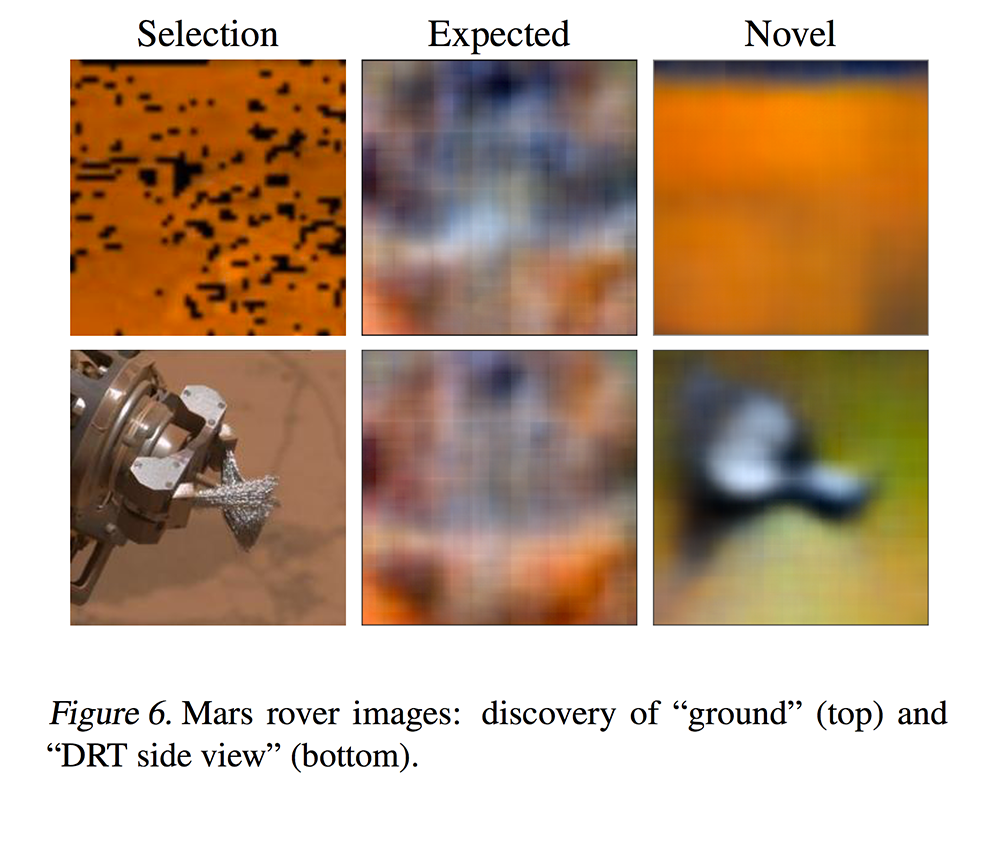

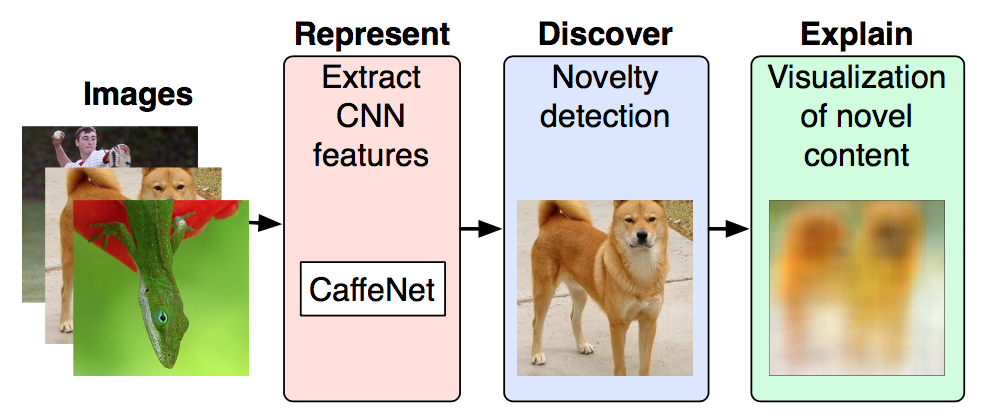

Automated detection of new, interesting, unusual, or anomalous images within large data sets has great value for applications ranging from surveillance (e.g., airport security) to science (observations that don’t fit a given theory can lead to new discoveries). Many image analysis systems now use convolutional neural networks (CNNs) to represent image content because CNNs achieve high classification accuracy. However, CNN representations are notoriously difficult for humans to interpret. We describe a new strategy that combines novelty detection with CNN image features to achieve rapid discovery with interpretable explanations of novel image content. We applied this technique to familiar images from ImageNet as well as to a scientific image collection from planetary science.
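Assuming the novelty detector is DEMUD (listed under Code & Data), its core idea can be illustrated roughly: repeatedly select the item that a model of already-selected items reconstructs worst, and report the reconstruction residual as the explanation of what is new about it. The sketch below is a simplified, hypothetical illustration, not the DEMUD code. DEMUD maintains a rank-k model with incremental SVD; this sketch instead grows a Gram-Schmidt basis from the selected items, skips mean-centering, and uses small hand-made lists in place of real CNN feature vectors. The names `discover` and `residual` are illustrative only.

```python
import math
from typing import List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def residual(x: Vector, basis: List[Vector]) -> Vector:
    """Return the part of x that the orthonormal basis cannot reconstruct."""
    r = list(x)
    for u in basis:
        c = dot(r, u)
        r = [ri - c * ui for ri, ui in zip(r, u)]
    return r


def discover(items: List[Vector], n_select: int) -> List[Tuple[int, float, Vector]]:
    """Greedily pick the items least explained by what has already been selected.

    Simplified stand-in for DEMUD (hypothetical): the model of "uninteresting"
    data is an orthonormal basis spanning the selected items, not a rank-k SVD.
    Returns (index, novelty score, residual) triples in selection order; the
    residual shows which features made the item novel.
    """
    basis: List[Vector] = []
    remaining = list(range(len(items)))
    picks: List[Tuple[int, float, Vector]] = []
    for _ in range(min(n_select, len(items))):
        # Score every remaining item by its reconstruction error (residual norm).
        best_idx, best_r, best_score = -1, [], -1.0
        for i in remaining:
            r = residual(items[i], basis)
            score = math.sqrt(dot(r, r))
            if score > best_score:
                best_idx, best_r, best_score = i, r, score
        picks.append((best_idx, best_score, best_r))
        remaining.remove(best_idx)
        # Fold the selected item into the model: its unexplained direction
        # becomes a new unit basis vector (one Gram-Schmidt step).
        if best_score > 1e-12:
            basis.append([v / best_score for v in best_r])
    return picks


if __name__ == "__main__":
    # Hypothetical 3-d "CNN features"; the last item is the odd one out.
    features = [
        [1.0, 0.0, 0.0],
        [0.9, 0.1, 0.0],
        [1.1, 0.0, 0.0],
        [0.0, 0.0, 2.0],
    ]
    for idx, score, expl in discover(features, 3):
        print(idx, round(score, 3), [round(v, 3) for v in expl])
```

On this toy input the items are selected in the order 3, 2, 1. The residual for item 1 is nonzero only in the second feature, which is the part of that item the earlier selections cannot explain. In the full approach described above, such a residual lives in CNN feature space, which is why a feature-inversion method (such as the Dosovitskiy and Brox visualization listed below) is needed to turn it back into an image a person can inspect.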

Code & Data

- DEMUD: [GitHub repository]
- Supplemental scripts and ImageNet data: [GitHub repository]
- Dosovitskiy and Brox visualization: [Source]
- Mars image data set: [Zenodo]

Paper

Interpretable Discovery in Large Image Data Sets. In WHI 2018. [link to paper]

Acknowledgements

We thank the Planetary Data System Imaging Node for funding this project. Part of this research was carried out at the Jet Propulsion Laboratory, California Institute of Technology, under a contract with the National Aeronautics and Space Administration.